Explainable AI (XAI) represents a major shift in how people work with machine learning systems. Many current models act as black boxes: they produce results without exposing the reasoning behind them. This lack of clarity creates doubt in fields like medicine and finance. XAI addresses the problem by giving a clear view of the decision-making process, so that results can be understood and questioned by the people who rely on them.

As the demand for smart systems grows, the need for skilled workers remains very high. For this reason, many people look for an Artificial Intelligence Online Course in India today. Understanding the inner logic of models is now just as vital as building them. Furthermore, XAI focuses on fairness and safety in every single step of the calculation. This approach changes complex math into simple insights to build much better organisational trust.

Definition of Explainable AI (XAI)

To begin, Explainable AI is a set of tools that help people trust machine results. It refers to frameworks that make the internal logic of a model very easy to see. Instead of just getting a result, users see the specific facts that changed the outcome. Thus, this transparency is vital for fixing errors and ensuring the system has no bias.

The Inner Workings of Explainable AI Systems

Additionally, XAI works by adding a layer of simple logic between the model and the person.

The Vital Importance of Clarity in AI

Moreover, modern systems often make life-changing choices about loans and health. Without a clear explanation, a biased model could cause a lot of harm to people.

Core Pillars and Principles of XAI

In addition, effective XAI relies on four main pillars to keep the information truly useful.

Technical Methods for Achieving Model Interpretability

On the other hand, techniques change based on when the explanation happens during the process.

Categorising Different XAI Model Types

There are also two main ways to group these different explanation methods today.

Strategic Advantages of Transparent AI Models

Furthermore, using XAI leads to much stronger and more ethical tech solutions for everyone.

Implementing the LIME Framework within Python

Next, we look at the LIME framework, which is a very popular tool for Python. It works by changing the input data slightly to see how the results change. Consequently, this helps create a very simple model to explain one specific data point.

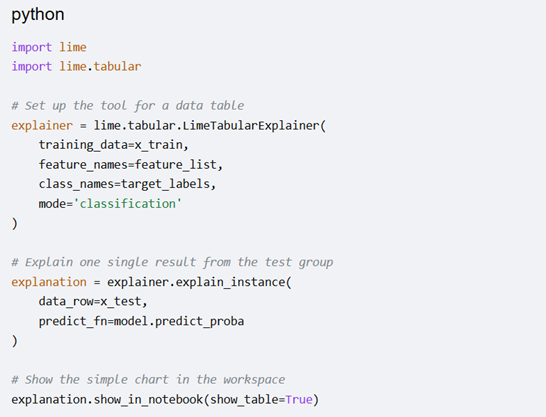

Below is a conceptual example of how to implement this using Python libraries.

In short, this code shows which facts pushed the model toward a certain class. It provides a visual list of the weight for each fact used in the math. Therefore, using these tools ensures the path from data to choice is always clear. Learning these libraries is now a basic part of all modern technical training.

conclusion

Ultimately, the growth of smart machines depends on the total clarity of the logic used. By moving away from hidden systems, the tech world builds a safer space for everyone. XAI methods provide the tools needed to check, fix, and trust every digital choice. In conclusion, embracing openness ensures that tech helps people while staying very accurate and fair.

As demand for AI systems grows, so does the need for skilled practitioners, and many people now look for an Artificial Intelligence Online Course in India. Understanding the inner logic of models is now as important as building them. XAI also keeps fairness and safety in view throughout that work, turning complex math into insights that build organisational trust.

Definition of Explainable AI (XAI)

Explainable AI is a set of tools and frameworks that help people trust machine results by making a model's internal logic visible. Instead of just getting a result, users see the specific input features that drove the outcome. This transparency is vital for fixing errors and for detecting bias in the system.

The Inner Workings of Explainable AI Systems

XAI works by adding a layer of simple, human-readable logic between the model and the person using it.

- It measures which input features have the most impact on the final result.

- It fits simple versions of complex models to explain specific data points.

- Visual charts show the link between the input data and the final scores.

- Mathematical attribution techniques calculate the weight of each variable in a prediction.

- The process turns high-level math into plain language or clear visual maps.
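The first item in the list, measuring which inputs matter most, can be sketched with permutation importance. This technique is chosen here for illustration and is not named in the article. The `model` function, the two features, and the generated data are all hypothetical stand-ins. The idea is to shuffle one feature at a time and record how much the prediction error grows.

```python
import random


def model(row):
    """Hypothetical stand-in for a trained model: a score from two inputs."""
    income, age = row
    return 0.8 * income + 0.1 * age


def mean_abs_error(rows, targets, predict):
    """Average absolute gap between predictions and targets."""
    return sum(abs(predict(r) - t) for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(rows, targets, predict, n_features, seed=0):
    """Return, per feature, the error increase caused by shuffling that feature."""
    rng = random.Random(seed)
    baseline = mean_abs_error(rows, targets, predict)
    importances = []
    for j in range(n_features):
        # Shuffle only column j, which breaks its link to the target.
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(mean_abs_error(shuffled, targets, predict) - baseline)
    return importances


# Made-up data: 200 rows of (income, age), both drawn from the same range.
data_rng = random.Random(42)
rows = [(data_rng.uniform(0, 10), data_rng.uniform(0, 10)) for _ in range(200)]
targets = [model(r) for r in rows]

importances = permutation_importance(rows, targets, model, n_features=2)
for name, value in zip(["income", "age"], importances):
    print(f"{name}: {value:.3f}")
```

Because the stand-in model weights income eight times more heavily than age, shuffling income should hurt the error far more, and the printed scores reflect that ordering.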

The Vital Importance of Clarity in AI

Modern systems often inform life-changing decisions about loans and health. Without a clear explanation, a biased model could cause serious harm to people without anyone noticing.

- XAI helps practitioners find errors in the data or the model setup.

- Legal and compliance teams need this clarity to meet data protection and privacy laws.

- Companies use these tools to build trust with a wide customer base.

- Domain experts can check that the model's logic matches real-world common sense.

- Students in an AI Course in Gurgaon study XAI to make models more reliable.

Core Pillars and Principles of XAI

Effective XAI relies on four main pillars that keep explanations useful.

- Meaning: The explanation must be understandable to the person reading it.

- Truth: The explanation must accurately reflect the process the model actually followed.

- Limits: The system should say when it lacks enough data to give a reliable answer.

- Openness: Every step from data to decision must be open to a full review.

Technical Methods for Achieving Model Interpretability

Techniques differ depending on when the explanation is produced: some are built into the model itself, while others are applied after training.

- Feature Weight: This shows which features mattered most for one specific prediction.

- Effect Plots: These show the average effect of one feature on the prediction, as in partial dependence plots.

- Heat Maps: These highlight the parts of an image that most influenced a specific label.

- Simple Models: A basic, interpretable model approximates the behaviour of a much more complex one.
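As a rough sketch of the Effect Plots idea, the snippet below computes a partial dependence curve: one feature is forced to each value on a grid, and the model's predictions are averaged over the rest of the data. The `model` function, the feature names, and the four data rows are hypothetical and serve only to make the arithmetic easy to follow.

```python
def model(row):
    """Hypothetical stand-in for a trained model (not from the article)."""
    income, debt = row
    return 2.0 * income - 0.5 * debt * debt


def partial_dependence(rows, predict, feature, grid):
    """Average prediction when `feature` is forced to each value in `grid`."""
    curve = []
    for value in grid:
        # Overwrite one column with the grid value and keep the others as observed.
        forced = [r[:feature] + (value,) + r[feature + 1:] for r in rows]
        curve.append(sum(predict(r) for r in forced) / len(forced))
    return curve


# Made-up rows of (income, debt).
rows = [(1.0, 0.0), (2.0, 1.0), (3.0, 2.0), (4.0, 3.0)]
grid = [0.0, 1.0, 2.0]

income_curve = partial_dependence(rows, model, feature=0, grid=grid)
print(income_curve)  # [-1.75, 0.25, 2.25]
```

Each step along the income grid raises the averaged prediction by 2.0, which matches the income coefficient in the stand-in model. Plotting `grid` against `income_curve` would give the effect plot itself.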

Categorising Different XAI Model Types

Explanation methods are commonly grouped along two axes: scope (global or local) and generality (model-agnostic or model-specific).

- Global Views: These describe the overall logic of the entire machine learning model.

- Local Views: These explain why the model made one specific prediction.

- General Tools: These model-agnostic tools work on any model, regardless of its internal design.

- Specific Tools: These model-specific methods work only for certain architectures, such as deep neural networks.

Strategic Advantages of Transparent AI Models

Using XAI leads to more robust and more ethical technology.

- It reduces the risk of unfair treatment of specific groups of people.

- Teams can find and fix performance issues faster.

- Users feel safer following advice from a system that explains itself.

- It helps bridge the gap between technical experts and the general public.

- Learners in an AI Course in Noida use these tools to lead better projects.

Implementing the LIME Framework within Python

Next, we look at LIME (Local Interpretable Model-agnostic Explanations), a popular explanation framework with a Python implementation. It perturbs the input data slightly and observes how the model's output changes. It then fits a simple model on those perturbed samples to explain one specific data point.

| Component | Function in LIME |
| --- | --- |
| Perturbation | Changing input values slightly to observe output shifts |
| Similarity Kernel | Weighting the new samples based on proximity to the original |
| Local Model | Fitting a simple regressor to explain the local area |

Below is a conceptual example of how to implement this using Python libraries.
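A minimal sketch of the three components from the table, written with the Python standard library only so that it runs without extra packages. It is not the `lime` package's API. The `black_box` model, the feature names, the noise scale, and the exponential kernel are all assumptions made for illustration.

```python
import math
import random


def black_box(x):
    """Hypothetical opaque model: returns a class-1 probability."""
    return 1.0 / (1.0 + math.exp(-(2.0 * x[0] - 0.5 * x[1])))


def solve(a, b):
    """Solve the linear system a * coef = b by Gaussian elimination."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Partial pivoting keeps the elimination numerically stable.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    coef = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * coef[c] for c in range(r + 1, n))
        coef[r] = (m[r][n] - tail) / m[r][r]
    return coef


def explain_instance(instance, predict, n_samples=500, scale=0.5,
                     kernel_width=0.75, seed=0):
    """Return one local weight per feature for a single prediction."""
    rng = random.Random(seed)
    # 1. Perturbation: sample points around the instance with Gaussian noise.
    samples = [[v + rng.gauss(0.0, scale) for v in instance]
               for _ in range(n_samples)]
    outputs = [predict(s) for s in samples]
    # 2. Similarity kernel: samples closer to the instance get larger weights.
    weights = [
        math.exp(-sum((a - b) ** 2 for a, b in zip(s, instance)) / kernel_width ** 2)
        for s in samples
    ]
    # 3. Local model: weighted least squares on the columns [1, x0, x1, ...].
    design = [[1.0] + s for s in samples]
    k = len(instance) + 1
    xtwx = [[sum(w * d[i] * d[j] for w, d in zip(weights, design))
             for j in range(k)] for i in range(k)]
    xtwy = [sum(w * d[i] * y for w, d, y in zip(weights, design, outputs))
            for i in range(k)]
    return solve(xtwx, xtwy)[1:]  # drop the intercept, keep feature weights


feature_names = ["income", "debt"]  # hypothetical feature names
local_weights = explain_instance([0.2, 0.4], black_box)
explanation = dict(zip(feature_names, local_weights))
for name, weight in explanation.items():
    print(f"{name}: {weight:+.3f}")
```

A positive weight means the feature pushed this prediction toward class 1, and a negative weight means it pushed away. With the `lime` package itself, the corresponding entry point for tabular data is `lime.lime_tabular.LimeTabularExplainer` and its `explain_instance` method.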

In short, this code shows which features pushed the model toward a certain class. It provides a list of the weight for each feature used in the local model. Tools like this help make the path from data to decision easier to follow. Learning these libraries is becoming a common part of technical training.

Conclusion

Ultimately, the responsible growth of AI depends on how clearly its logic can be understood. By moving away from opaque systems, the tech world builds a safer space for everyone. XAI methods provide the tools needed to check, fix, and trust automated decisions. Embracing openness helps technology serve people while staying accurate and fair.